AI Native Medhavi Newsletter

Engineering Leaders Embrace AI, Claude Launches on AWS, and New Tools for Codex

AI Tools Empower Engineering Leaders

Top News

A recent survey of 219 engineering leaders revealed a mix of excitement and anxiety about adopting AI in their workflows. About 72% of respondents expressed enthusiasm about becoming AI-native, while 64% acknowledged challenges in integrating AI into existing systems. The results suggest that engineering teams are optimistic about AI's benefits but concerned about the disruptions it may cause. Notably, 58% said they need better training and resources to implement AI tools effectively.

Augment Code Blog

At Cosmos Conf 2026, Kirill Gavrylyuk, VP of Azure Cosmos DB, highlighted three key trends through which AI is reshaping application architecture. AI is enabling developers to iterate faster, ship more frequently, and scale applications instantly, which calls for a shift to flexible, semi-structured data that adapts to changing needs. Semantic search and AI-based query mechanisms such as vector and hybrid search are also becoming essential features of modern applications. Overall, the conference emphasized that data platforms must evolve into systems of reasoning that support AI-driven applications.

Azure Blog

Anthropic has launched the Claude Platform on AWS, now generally available to AWS customers. The new deployment option gives users direct access to the Claude Platform while relying on AWS authentication, billing, and monitoring. The integration aims to simplify deployment by building on AWS services that customers already use.

InfoQ AI/ML/Data Engineering News

At Red Hat Summit 2026, Microsoft and Red Hat highlighted how Microsoft Azure Red Hat OpenShift supports the modernization of AI workloads, helping organizations such as Banco Bradesco move from pilot projects to production systems. Banco Bradesco, one of Latin America's largest financial institutions, uses Azure Red Hat OpenShift to manage more than 200 AI initiatives, with governance and security provided through Azure services. The integration provides consistent identity and policy management, and the platform is already used in regulated environments, for example by the Akkuro lending platform from Topicus. Microsoft's recognition as the 2026 Platform Modernization Partner of the Year underscores the effectiveness of the collaboration in delivering enterprise-grade solutions.

Azure Blog

On May 13, 2026, Anthropic announced a version of Claude tailored for small businesses. It aims to streamline tasks and boost productivity by letting users automate workflows and generate content efficiently. The launch is part of a broader effort to make advanced AI tools more accessible to smaller enterprises, with a focus on affordability and ease of use. Additional features and pricing details are expected as the rollout continues.

Anthropic News

Tools & Launches

Following the release of GPT-5.5, support for Codex among AI engineers has noticeably increased. Anthropic has introduced a new pricing structure for its Claude subscriptions that gives subscribers API credits equal to their subscription cost. For example, a $200 subscription includes $200 worth of API credits that can be used across various platforms, including for programmatic use of Claude. The reception has been cautiously optimistic, although many users of alternative platforms feel the changes do not adequately serve their needs.

Latent Space

OpenAI has built a secure sandbox for Codex on Windows, designed to make coding agents safer and more efficient. The sandbox enforces controlled file access and network restrictions to prevent malicious activity while the agent codes. The goal is to let developers use Codex's capabilities in a more secure environment, improving productivity while reducing the risks of AI-assisted coding.

OpenAI Blog
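
To make the idea of controlled file access and network restrictions concrete, here is a minimal Python sketch of the kind of policy checks a sandbox might apply before an agent touches a file or opens a connection. It is not OpenAI's implementation: the functions `is_path_allowed` and `is_host_allowed` and the `ALLOWED_HOSTS` set are hypothetical, and a real sandbox enforces such rules at the operating-system level rather than inside the agent's own process.

```python
from pathlib import Path
from urllib.parse import urlparse

# Hypothetical network allowlist; a real sandbox policy would be configurable.
ALLOWED_HOSTS = {"pypi.org", "files.pythonhosted.org"}


def is_path_allowed(requested: str, workspace_root: str) -> bool:
    """Return True if `requested` resolves to a location inside `workspace_root`.

    Both paths are resolved first, which collapses ".." segments and follows
    symlinks, so "src/../../secrets.txt" cannot escape the workspace.
    An absolute `requested` path replaces the root when joined, so it is
    checked as-is.
    """
    root = Path(workspace_root).resolve()
    target = (root / requested).resolve()
    return target == root or root in target.parents


def is_host_allowed(url: str) -> bool:
    """Return True only if the URL's host is on the allowlist."""
    host = urlparse(url).hostname  # .hostname is lowercased, or None if absent
    return host is not None and host in ALLOWED_HOSTS


print(is_path_allowed("src/main.py", "workspace"))     # inside the workspace
print(is_path_allowed("../secrets.txt", "workspace"))  # escapes via ".."
print(is_host_allowed("https://pypi.org/simple/"))     # allowlisted host
```

Both checks deny by default: any path that does not resolve under the workspace root, and any host not explicitly listed, is rejected.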

GitHub has introduced a new Enterprise Installation API, now in public preview, that lets GitHub App developers check directly whether their app is installed in an enterprise and retrieve its installation ID. Because developers no longer need to paginate through a list of installations, obtaining installation tokens for enterprises is simpler and faster. The feature responds directly to community feedback, particularly from GitHub Actions contributors and participants in the Enterprise Apps preview.

GitHub Changelog
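
The benefit of skipping pagination can be sketched with a small simulation. The data and both lookup functions below are hypothetical stand-ins, not GitHub's API: `find_by_paginating` mimics scanning a paged list of installations (GitHub's REST list endpoints generally cap `per_page` at 100), while `find_by_direct_lookup` models a dedicated endpoint that answers in a single request.

```python
from typing import Dict, List, Optional, Tuple

# Hypothetical installation records: (enterprise_slug, installation_id).
Installation = Tuple[str, int]


def fetch_page(installs: List[Installation], page: int, per_page: int) -> List[Installation]:
    """Simulate one request to a paginated list endpoint (pages are 1-based)."""
    start = (page - 1) * per_page
    return installs[start:start + per_page]


def find_by_paginating(installs: List[Installation], enterprise: str,
                       per_page: int = 100) -> Tuple[Optional[int], int]:
    """Scan page by page; return (installation_id or None, requests made)."""
    page, requests = 1, 0
    while True:
        batch = fetch_page(installs, page, per_page)
        requests += 1
        for slug, installation_id in batch:
            if slug == enterprise:
                return installation_id, requests
        if len(batch) < per_page:  # a short or empty page means the list is exhausted
            return None, requests
        page += 1


def find_by_direct_lookup(index: Dict[str, int], enterprise: str) -> Tuple[Optional[int], int]:
    """Model a dedicated lookup endpoint: one request, found or not."""
    return index.get(enterprise), 1


installs = [(f"ent-{i}", 10_000 + i) for i in range(1_000)]
print(find_by_paginating(installs, "ent-999"))
print(find_by_direct_lookup(dict(installs), "ent-999"))
```

With 1,000 installations, the paginated scan needs ten requests to reach the last entry and eleven to conclude that an enterprise is absent, whereas the direct lookup always costs one request.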

GitHub has announced a new Agent tasks REST API, now in public preview for Copilot Business and Copilot Enterprise users. The API lets users start Copilot cloud agent tasks programmatically; these tasks make code changes and create pull requests in the background. Highlighted capabilities include working across multiple repositories, automating release processes, and tracking task progress through the API. Supported authentication methods include personal access tokens and OAuth tokens, and support for GitHub App installation access tokens is expected soon.

GitHub Changelog

LangChain has introduced LangSmith Context Hub, designed to extend LangChain's API integration capabilities. The hub lets developers manage and orchestrate contextual prompts, streamlining AI application development. With this launch, LangChain aims to improve the developer experience through more efficient memory and state management in context handling. The Context Hub is positioned as a solution for companies looking to scale their AI deployments effectively.

LangChain Blog

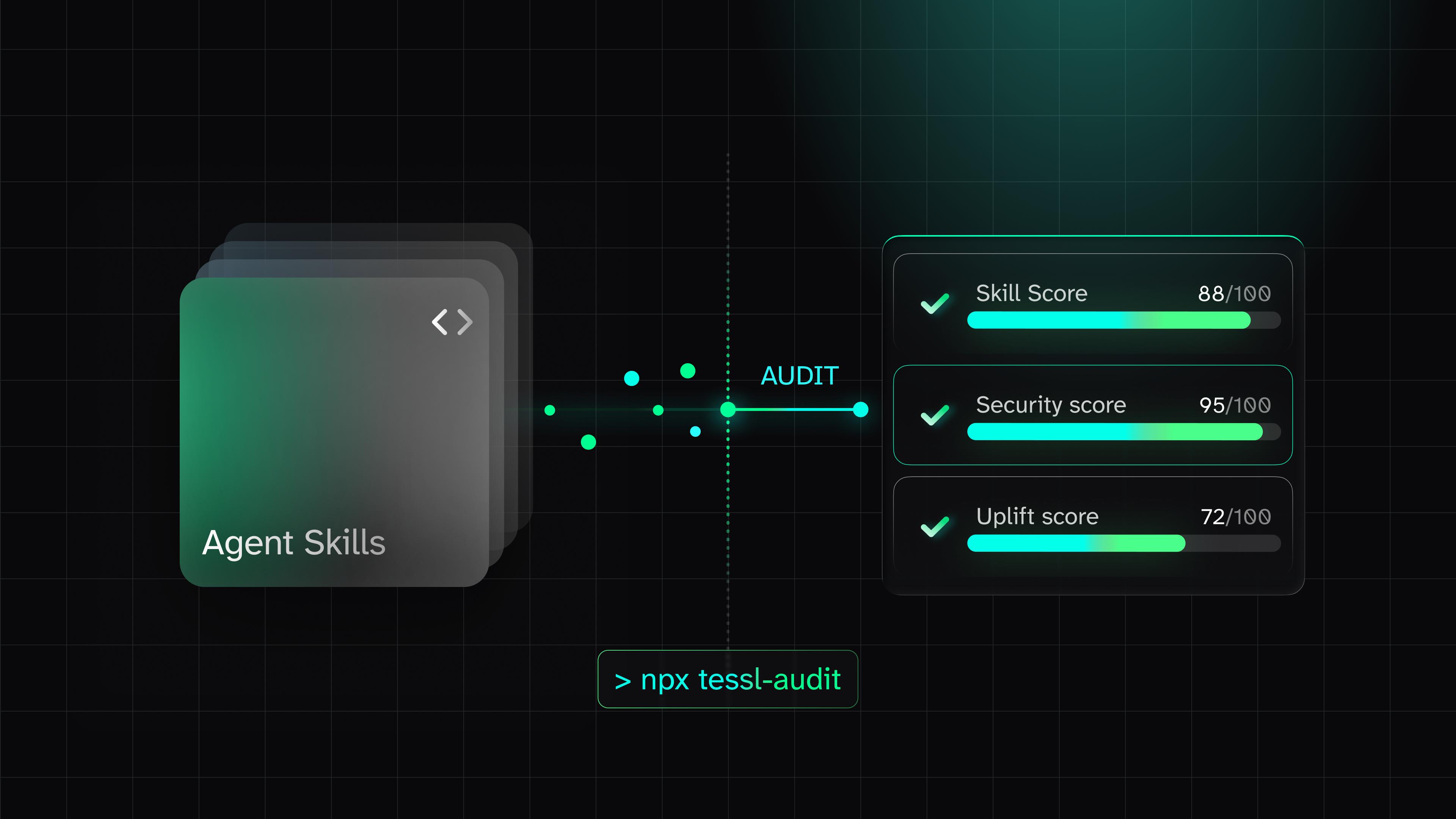

Tessl has introduced tessl-audit, an open-source tool for auditing plugins that extend AI agents' skills and functionality. With a single command, developers can analyze the security and performance of their plugins, answering key questions about security scanning, quality assessment, and performance improvement. The tool aims to reduce the context security risks associated with AI agent plugins, ensuring that plugins do not introduce unsafe behavior or degrade performance. It reads the tessl.json file and produces a report on plugin performance in approximately 30 seconds.

Tessl Blog

Qodo has introduced its new Findings Page, which helps engineering leaders understand risk across their codebases more effectively. It supports reviewing multiple pull requests at once, extending the traditional single-PR review model. As AI writes more code, the Findings Page helps teams manage the risk that AI-generated code introduces. The platform aims to streamline code risk assessment and make reviews more efficient, keeping pace with the rapid development cycles of modern software engineering.

Qodo Blog

The latest ElevenLabs Changelog introduces several enhancements, including SIP signaling logs for SIP trunk calls, which now show the call ID, phone numbers, addresses, and message direction to simplify debugging. A new API lists the indexed chunks of a document, and text-based documents can now be edited in place without re-uploading. SMS support has been added to conversation metadata, including an SMS authorization method and Twilio SMS integration. Agent configuration updates include new background music settings, multiple configuration schemas, and new LLM options such as the gpt-5.4-mini and gpt-5.4-nano variants.

ElevenLabs Changelog

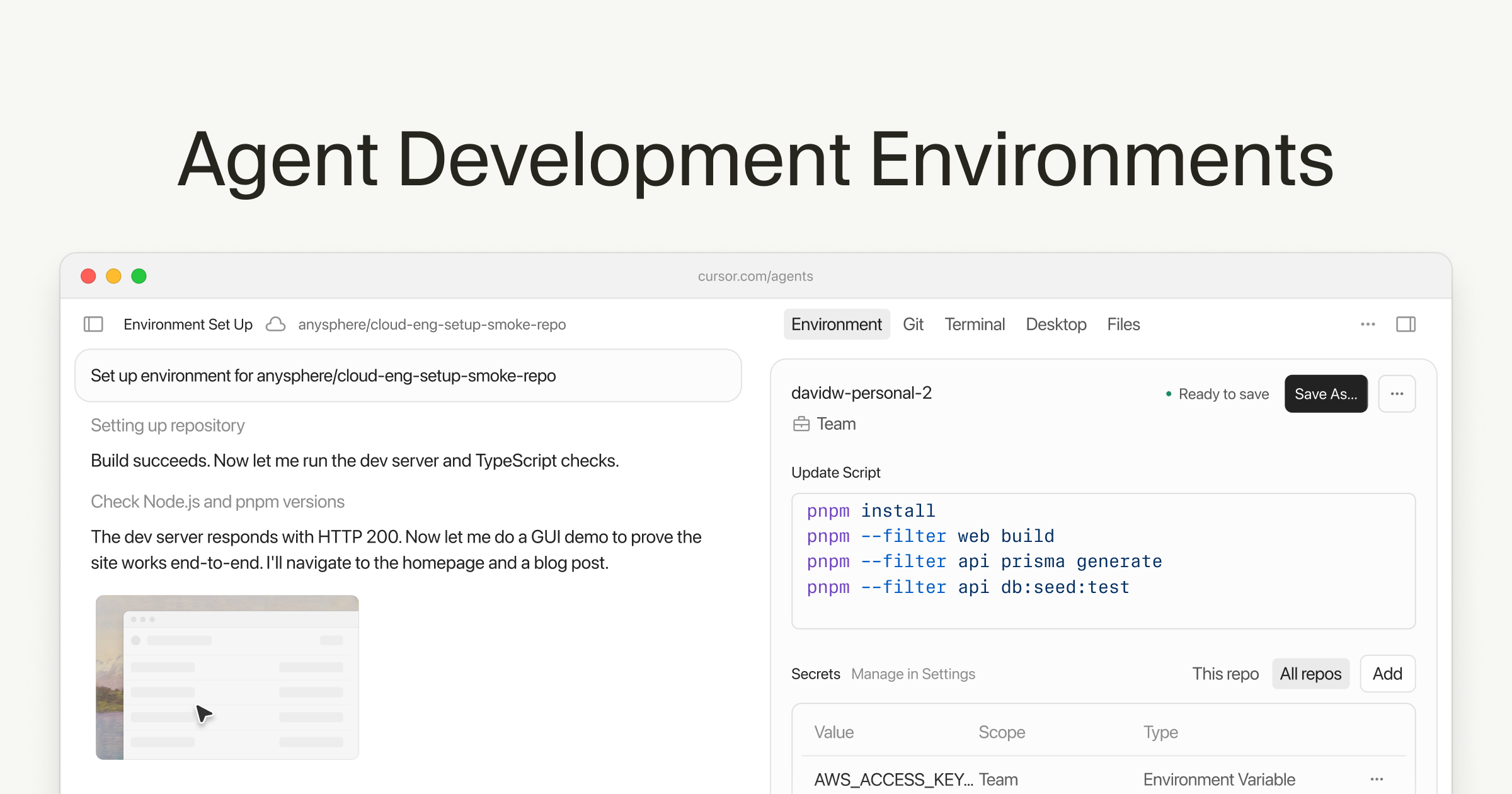

This post discusses development environments designed specifically for building AI agents, highlighting six key components that improve productivity and efficiency. It stresses the importance of scalable infrastructure and testing tools for deploying AI agents effectively. According to the post, adopting these environments can increase deployment speed by 30%, benefiting both individual developers and teams. Recommended practices for setting up such environments include using containerization and cloud services for better resource management.

Cursor Blog

Windsurf has released Claude Opus 4.7 (fast mode) in its AI-powered coding tool, offering the full capabilities of Claude Opus 4.7 at approximately 2.5 times higher output speed. The announcement emphasizes that fast mode improves speed while retaining the intelligence of the Opus model, making it a significant upgrade for Windsurf users.

Windsurf (Codeium) Blog

Models

This AWS AI Blog post describes a new approach to processing complex financial documents with Pulse AI and Amazon Bedrock. Traditional optical character recognition (OCR) tools struggle with the intricate structures of financial documents, producing systematic errors that require costly manual correction. By combining Pulse AI's document understanding capabilities with Amazon Bedrock's model customization services, organizations can improve accuracy and extract contextually relevant financial insights. The integration pairs vision language models with classical ML components to improve understanding of financial documents.

AWS AI Blog

This post discusses fine-tuning large language models (LLMs) with Amazon SageMaker AI in conjunction with Databricks Unity Catalog. It highlights the data governance and security challenges of integrating the two platforms, particularly around managing permissions and metadata. The post outlines a secure LLM fine-tuning workflow that reads training data from Unity Catalog, uses EMR Serverless for preprocessing, and tracks data lineage. The approach supports compliance and visibility while keeping governance of the training process centralized.

AWS AI Blog

The GitHub repository 'huggingface/pytorch-image-models' (timm) bills itself as the largest collection of PyTorch image encoders and backbones, with training, evaluation, and inference scripts plus pretrained weights. It supports models such as ResNet, EfficientNet, Vision Transformer (ViT), and MobileNet, and has gained 36,783 stars and 5,157 contributions on GitHub. Its continued growth reflects its importance in the AI community, especially among developers working with image models.

GitHub Trending (AI)

OpenAI's recent deprecation of its fine-tuning APIs marks a significant shift in AI engineering, since the company had previously led in this area. The article suggests the move may help competitors such as Anthropic command a higher valuation amid discussions of a 2026 GPU crunch. Although fine-tuning adoption is declining overall, top-tier companies such as Cursor and Cognition are reportedly increasing their use of reinforcement learning fine-tuning on open models. The article reflects on the broader implications for the industry and on how alternatives such as long prompts may be emerging as viable strategies.

Latent Space

Vercel's AI Gateway production index report analyzes seven months of production workloads from more than 200,000 unique teams. Anthropic leads in spend at 61%, while Google leads in token volume at 38%. More than half (59%) of all token volume comes from agentic workloads, which have doubled in six months. The gap between spend and volume suggests that different models serve distinct use cases: Claude Opus captures premium reasoning calls, while Gemini Flash powers high-volume, low-risk calls, reflecting a shift of spend toward quality-critical applications.

Vercel Blog

The article examines pipeline friction in AI model serving: many teams spend weeks fine-tuning models only to hit problems such as layer breakage, input shape mismatches, and version inconsistencies at deployment time. These issues can cause runtime failures and degrade performance, costing organizations significant time and resources. The article argues for smoother transitions from training to production to mitigate these problems and improve operational efficiency.

NVIDIA Technical Blog

Agents

This post introduces Managed Deep Agents, a new feature that makes agentic workflows easier to manage and deploy. It lets developers integrate AI agents with minimal setup, reducing the complexity of managing agent code. Highlighted metrics include a 50% reduction in setup time and a 30% increase in task completion rates. Victor Moreira details use cases in which Managed Deep Agents automate long-running tasks, further improving developer productivity.

LangChain Blog

This post covers the release of Deep Agents v0.6 and its new capabilities. Key improvements include more efficient task handling and an expanded feature set for better interaction with user inputs. The release also adds advanced debugging and diagnostic tools that may reduce error rates in agent workflows, and the documentation has been updated to help developers use the new functionality.

LangChain Blog

The article discusses the rapid adoption of the Model Context Protocol (MCP) and the Agent-to-Agent (A2A) Protocol, introduced in November 2024 and April 2025 respectively, and the security challenges this creates for enterprises managing many AI agent deployments. It highlights the risks of unvetted MCP servers and A2A agents and notes that manual security reviews can delay deployments. A partnership between AWS and Cisco aims to address these challenges with automated security scanning of every MCP server, AI agent, and Agent Skill, improving visibility, security, and compliance in enterprise settings.

AWS AI Blog

NVIDIA's Metropolis Blueprint lets organizations turn millions of live video streams and hours of recorded footage into instantly searchable, actionable intelligence. It addresses the challenge of extracting real-time insights from large volumes of video by providing video search and summarization capabilities. Companies can use it to improve operational efficiency by gaining immediate access to critical information in their video content.

NVIDIA Technical Blog

The Hermes Agent, developed by Nous Research, has earned more than 140,000 GitHub stars in under three months, and OpenRouter ranks it as the most used agent globally. Designed for reliability and self-improvement, it runs on NVIDIA RTX PCs and DGX Spark. Key features include self-evolving skills, through which Hermes refines its abilities based on complex tasks and feedback, and contained sub-agents that organize work efficiently. The agent also integrates with messaging apps and can run continuously with access to local files and applications.

NVIDIA AI Blog

AWS announced a public preview of a new Amazon WorkSpaces capability that provides AI agents with managed virtual desktops, letting them operate legacy applications through computer vision and input simulation without needing APIs. Benchmarks indicate that these vision agents consume 45 times more tokens than traditional API-based agents. The capability aims to streamline agent interactions with legacy applications and improve operational efficiency.

InfoQ AI/ML/Data Engineering News

LangChain has introduced Delta Channels, a runtime improvement for long-running agents. The change aims to improve the performance and reliability of agent processes, particularly in complex scenarios that require interaction over extended periods. The post illustrates the benefits of the new runtime with practical applications and promises greater scalability, robustness, and efficiency, which should help developers building AI-powered autonomous agents.

LangChain Blog

The article argues that agent observability is becoming a control plane for production AI, detecting and mitigating poor decisions before they cause customer-facing failures. It describes a shift from simply evaluating trace viewers toward ensuring that agent stacks actively monitor and assess outcomes. The premise is that stronger observability frameworks can address flaws in AI systems proactively, improving reliability and preserving user trust.

Agent Mag

Devin now supports Android emulators, allowing developers to create and manage Android Virtual Devices (AVDs) for autonomous application development. Developers can quickly spin up AVD instances, simplifying the environment setup that Android testing depends on and reducing setup time, with the aim of improving productivity in Android development.

Devin (Cognition) Blog

Worth Reading

PyTorch 2.12 includes several significant updates: a batched linalg.eigh on CUDA that is up to 100x faster, a unified torch.accelerator.Graph API for graph capture across backends, and support for Microscaling (MX) quantization formats. ROCm users gain new memory segments and FlexAttention pipelining. The release comprises 2,926 commits from 457 contributors since version 2.11, as PyTorch continues to evolve into a hardware-agnostic platform for scalable production training and inference.

PyTorch Blog
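
Microscaling formats store each block of values with one shared power-of-two scale, so a single outlier only affects the precision of its own block. The plain-Python sketch below illustrates that idea, assuming 32-element blocks and a power-of-two shared scale as in the OCP Microscaling (MX) specification. The int8 element encoding and the scale-selection rule are simplifications chosen for clarity (the exponent is also not clamped to the spec's 8-bit range); this is neither the spec's exact algorithm nor PyTorch's MX API.

```python
import math
from typing import List, Tuple

BLOCK_SIZE = 32  # MX formats share one scale across a block of 32 elements


def quantize_block(values: List[float]) -> Tuple[int, List[int]]:
    """Quantize one block to int8 values with a shared power-of-two scale.

    Returns (shared_exponent, q) such that values[i] is approximately q[i] * 2**shared_exponent.
    """
    amax = max(abs(v) for v in values)
    if amax == 0.0:
        return 0, [0] * len(values)
    # Smallest exponent e with amax / 2**e <= 127, so the largest element fits in int8.
    e = math.ceil(math.log2(amax / 127))
    scale = 2.0 ** e
    # Clamp guards against floating-point error in log2 pushing a value just past 127.
    q = [max(-127, min(127, round(v / scale))) for v in values]
    return e, q


def quantize(values: List[float]) -> List[Tuple[int, List[int]]]:
    """Split a flat list into BLOCK_SIZE chunks and quantize each independently."""
    return [quantize_block(values[i:i + BLOCK_SIZE]) for i in range(0, len(values), BLOCK_SIZE)]


def dequantize(blocks: List[Tuple[int, List[int]]]) -> List[float]:
    """Reconstruct approximate floats: each int8 element times its block's 2**e."""
    out: List[float] = []
    for e, q in blocks:
        out.extend(x * 2.0 ** e for x in q)
    return out


# Large values in the first block, tiny values afterwards: each block gets its own scale.
vals = [math.sin(i) * (10.0 if i < 32 else 0.01) for i in range(70)]
blocks = quantize(vals)
print([e for e, _ in blocks])
```

Because the small values in the later blocks get a much smaller shared exponent than the first block, they keep far more precision than they would under a single scale for the whole tensor; the reconstruction error per element is at most half of its block's scale.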

Simon Willison's article discusses project management and feedback loops, emphasizing the importance of modularity in software design. In particular, he argues that every project should meet a minimum threshold of 'modular feedback' to improve productivity and communication, which can significantly improve project outcomes. Willison recommends systems that support continuous feedback and adaptation to increase coding efficiency and developer satisfaction, citing examples from multiple industries.

Simon Willison

Microsoft has made significant contributions to PostgreSQL, including 345 commits to the latest release and direct collaboration with PostgreSQL committers on upstream development. PostgreSQL is increasingly chosen for new workloads because of its proven production reliability, operational resilience, and extensibility. Recent enhancements in PostgreSQL 18 target performance areas such as asynchronous I/O and query planning, directly addressing bottlenecks seen in large-scale deployments. This work is part of Microsoft's broader investment in managed services and developer tools for PostgreSQL on Azure.

Azure Blog

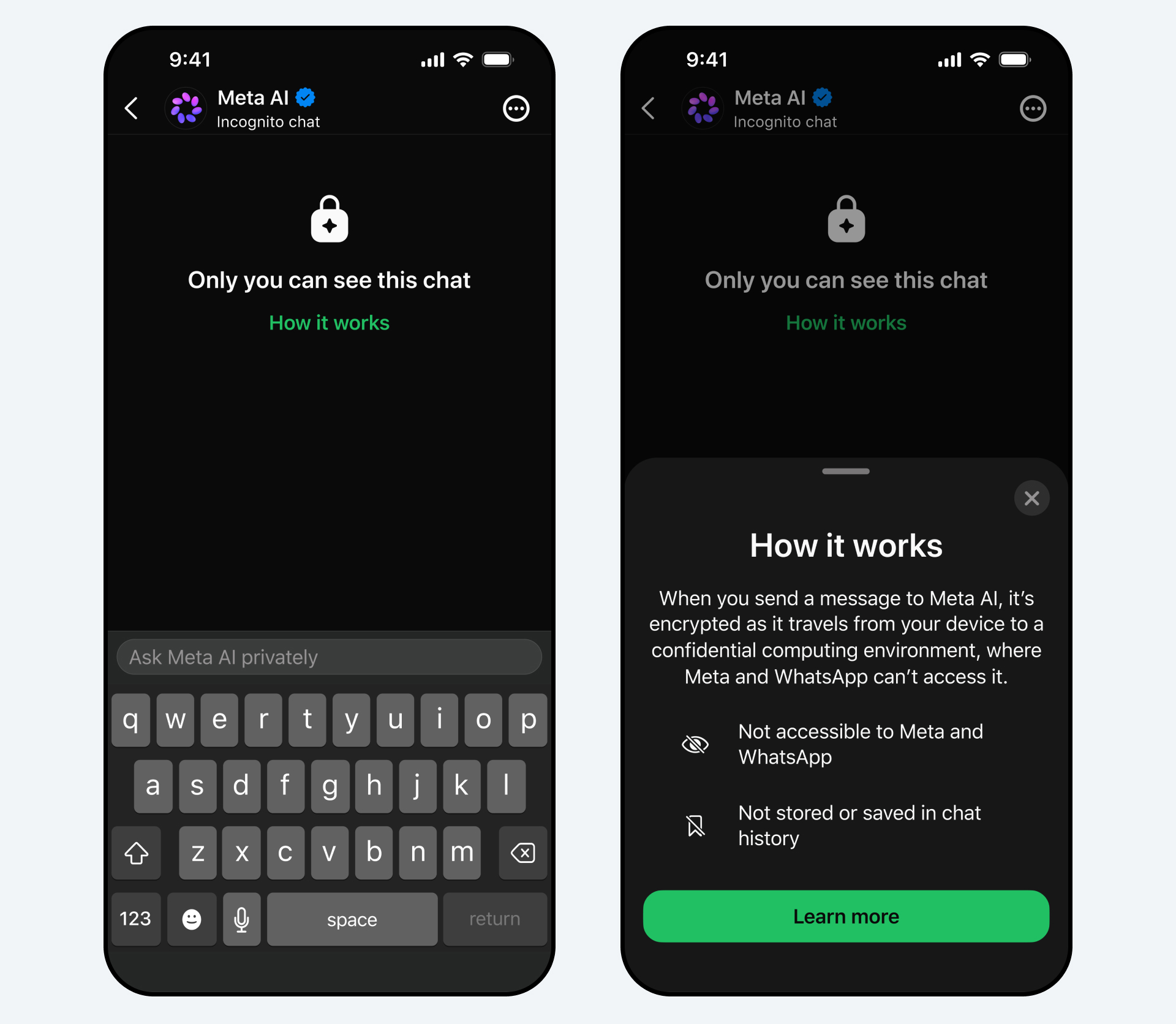

Meta is launching Incognito Chat, a new feature for AI conversations on WhatsApp and in the Meta AI app that is designed to guarantee privacy by processing messages in a secure environment that even Meta cannot access. The chats are temporary and disappear by default, so users can ask sensitive questions without fear of oversight. Meta will also introduce Sidechat, which provides private, contextual assistance within WhatsApp conversations. Incognito Chat will roll out in the coming months.

Meta AI Blog

ElevenLabs has released updates across its SDKs, including Python SDK v2.47.0 and JavaScript SDK v2.47.0, which add typed support for RAG chunk listing and IP allowlisting for service account API keys. Notably, the @elevenlabs/client package was updated to version 1.7.0, which supports the full tool result payload for agent tool responses and adds native mute and unmute support for Scribe realtime speech-to-text (STT). Other package updates cover contextual update management and improved voice metadata moderation.

ElevenLabs Changelog

At SAP Sapphire 2026, Microsoft and SAP announced new enterprise AI advancements, emphasizing their collaboration to improve operations and decision-making on Azure. They introduced 'Frontier Transformation', an effort to build a trusted, AI-first commercial cloud that supports modern AI applications at scale. The partnership expands their joint ecosystem and programs, including the Global RISE with SAP Acceleration Program on Microsoft Azure, to drive customer innovation. The initiative seeks to embed AI in operational processes, improving the relevance and quality of enterprise decisions.

Azure Blog

The article stresses thorough requirements analysis as a way to prevent bugs before coding begins, noting that many issues trace back to ambiguous statements in requirements documents. It highlights a common pitfall: without clear communication and shared understanding among stakeholders, features may work on the 'happy path' but fail in edge cases. The author shares anecdotes from engineers that show how misinterpreted requirements lead to production bugs.

Amazon Kiro Blog

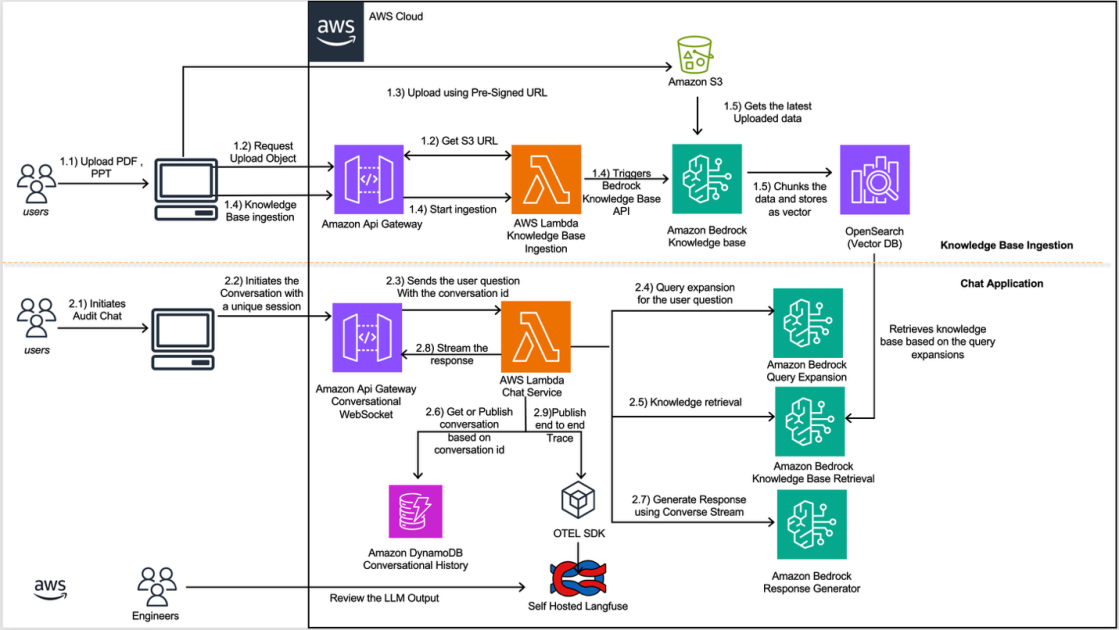

Amazon's Finance Technology (FinTech) teams are using Amazon Bedrock and other AWS services to streamline regulatory inquiry processes. The scalable AI application helps handle complex inquiries that involve document review and data retrieval, in compliance with the rules of various jurisdictions. The teams build dedicated knowledge bases for specific documents and reference materials, addressing challenges such as knowledge fragmentation and multi-turn conversational context. By maintaining conversational state and improving continuously, the solution increases the accuracy and efficiency of regulatory compliance work.

AWS AI Blog

The article explores techniques for generating visually appealing user interfaces (UIs) with AI. It emphasizes using neural networks to streamline UI design, citing a 30% reduction in design time reported by teams using automated UI generation tools. In studies with test users, generative layout optimization raised user satisfaction scores by 25%. Key findings include best practices for integrating AI into UI workflows, making the approach accessible to both novice and experienced designers.

Cerebras Blog

Research Corner

NVIDIA has announced a collaboration with Ineffable Intelligence to design advanced infrastructure for large-scale reinforcement learning systems. The partnership aims to build new pipelines for systems that learn from experience, a concept championed by Ineffable's founder David Silver, a pioneer in reinforcement learning. Such workloads need processing optimized differently from traditional pretraining, because real-time data generation stresses memory bandwidth and interconnects. By tackling the complexity of continuous learning, the two organizations aim to push the boundaries of AI research and development.

NVIDIA AI Blog

This paper proposes an AI Harness Engineering framework that identifies eleven component responsibilities essential for software engineering agents operating in real-world settings. Key components include task specification, context selection, and failure attribution, operationalized through a progressive four-level support ladder. The authors validated the framework on a controlled task and found that higher levels of harness exposure produced more detailed and useful output, such as reproduction logs and structured verification reports. The research argues that the effectiveness of software engineering agents depends on the combined interaction of the model, the harness, and the environment.

arXiv CS.SE

The article presents KITE (Knowledge-Informed Tutoring Engine), a Retrieval-Augmented Generation-based intelligent tutoring system.

arXiv CS.AI

Retrieval is Cheap, Show Me the Code: Executable Multi-Hop Reasoning for Retrieval-Augmented Generation.

arXiv CS.AI

The ToolWeave framework significantly improves the synthesis of complex multi-turn tool-calling dialogues, addressing challenges in training LLMs for autonomous operation. It makes synthetic dialogues more realistic by incorporating multi-step workflows, reduces parameter hallucination through a detailed planning phase, and aligns tool interactions with user goals. ToolWeave yields 45% more multi-step tool interactions than previous datasets. Models such as Llama-3.1-70B fine-tuned on ToolWeave reach 39.75% on BFCL-V3, outperforming models trained on data from ToolFlow, the previous state of the art, which scored 23.50%.

arXiv CS.CL
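
The two BFCL-V3 scores in the ToolWeave summary are easier to compare as an absolute and a relative gain. This is plain arithmetic on the reported figures, not an additional result from the paper:

```python
# BFCL-V3 scores (%) as reported in the ToolWeave summary.
toolweave_score = 39.75  # Llama-3.1-70B fine-tuned on ToolWeave
toolflow_score = 23.50   # models trained on ToolFlow data

absolute_gain = toolweave_score - toolflow_score       # percentage points
relative_gain = absolute_gain / toolflow_score * 100   # % improvement over the ToolFlow score

print(f"absolute: {absolute_gain:.2f} points")  # absolute: 16.25 points
print(f"relative: {relative_gain:.1f}%")        # relative: 69.1%
```

In other words, the ToolWeave-trained model scores roughly 1.7 times the ToolFlow figure.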

The paper examines the limits of learning from episodic memories that large language models (LLMs), in particular GPT-5.4, consolidate into textual memory banks. Memory utility initially increases but eventually degrades, to the point where the model fails 54% of ARC-AGI problems it had previously solved without memory. The study finds that consolidation can produce qualitatively different memories that are often inferior to simply retaining raw episodes, and argues that LLMs should manage memory by retaining evidence and explicitly gating consolidation.

arXiv CS.AI

The paper introduces AgentLens, a framework for process-level assessment of software engineering (SWE) agents that addresses the limitations of binary pass/fail evaluation signals. An analysis of 2,614 OpenHands trajectories from various model backends found that 10.7% of passing trajectories are 'Lucky Passes' that reflect flawed processes such as regression cycles and missing verification. The study also introduces AgentLens-Bench, a dataset of 1,815 trajectories annotated with quality scores that sort them into Lucky, Solid, and Ideal tiers; Lucky rates range from 0.5% to 23.2% across models. The anonymized project repository, including the dataset and the AgentLens SDK, is available on GitHub.

arXiv CS.SE
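
The Lucky rate is measured over passing trajectories only, so failed runs never enter the denominator. The sketch below computes it per model, assuming two hypothetical per-trajectory flags that stand in for the flaws the AgentLens summary names (regression cycles and missing verification). The paper's actual scoring and tier criteria are richer, and this code does not use the AgentLens SDK; the trajectories are made-up toy data.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class Trajectory:
    model: str
    passed: bool                # did the final patch pass the evaluation tests?
    had_regression_cycle: bool  # hypothetical flag: agent re-broke something it had fixed
    verified: bool              # hypothetical flag: agent checked its fix before submitting


def is_lucky_pass(t: Trajectory) -> bool:
    """Hypothetical rule: a pass reached through a flawed process."""
    return t.passed and (t.had_regression_cycle or not t.verified)


def lucky_rate(trajectories: List[Trajectory]) -> float:
    """Share of *passing* trajectories that are Lucky Passes (0.0 if none passed)."""
    passing = [t for t in trajectories if t.passed]
    if not passing:
        return 0.0
    return sum(is_lucky_pass(t) for t in passing) / len(passing)


def lucky_rate_by_model(trajectories: List[Trajectory]) -> Dict[str, float]:
    """Lucky rate per model backend."""
    models = sorted({t.model for t in trajectories})
    return {m: lucky_rate([t for t in trajectories if t.model == m]) for m in models}


# Toy, made-up trajectories for illustration only.
toy = [
    Trajectory("model-a", passed=True, had_regression_cycle=False, verified=True),
    Trajectory("model-a", passed=True, had_regression_cycle=True, verified=True),
    Trajectory("model-a", passed=False, had_regression_cycle=True, verified=False),
    Trajectory("model-b", passed=True, had_regression_cycle=False, verified=False),
]
print(lucky_rate_by_model(toy))  # {'model-a': 0.5, 'model-b': 1.0}
```

The failed model-a trajectory is excluded even though its process is also flawed, because a Lucky Pass is by definition a pass.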

The paper introduces IAP (Intent-Aware Personalization), a reinforcement learning framework designed to enhance personalized question answering in language models.

arXiv CS.CL