AI Native Medhavi Newsletter

From Codex to Copilot CLI: Enhancing Your AI Development Experience

GitHub Copilot App Is Now Available In Technical Preview

Top News

The GitHub Copilot app is now in technical preview, offering a GitHub-native desktop experience for agentic development. Developers can start sessions from GitHub issues or pull requests, with focused session management and integrated tools for review and testing. Copilot Pro and Pro+ subscribers can sign up for early access as the technical preview expands, and Copilot Business and Enterprise subscribers are gaining access as well.

GitHub Changelog

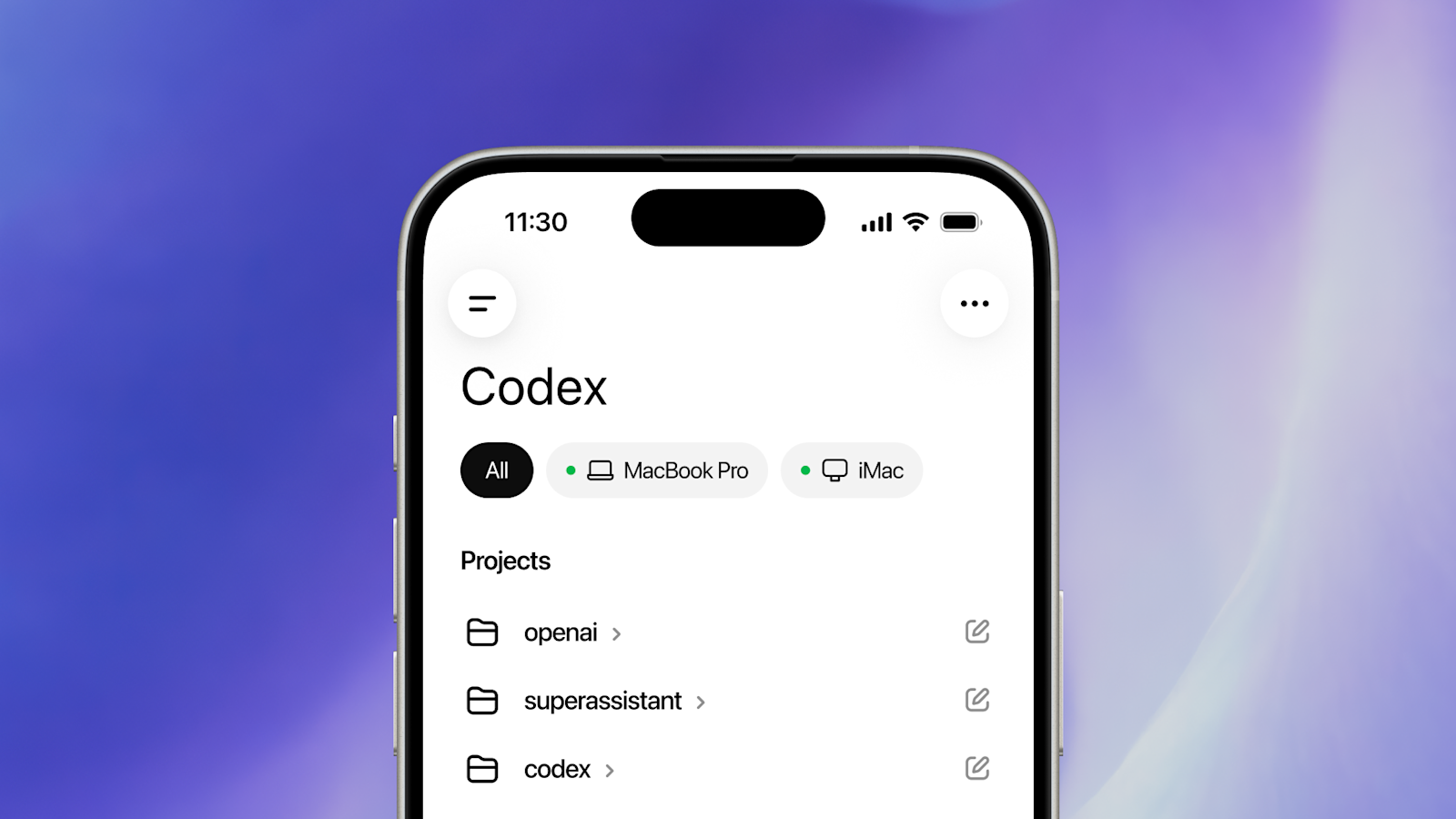

OpenAI introduces real-time Codex management via the ChatGPT mobile app, enabling developers to steer projects from any location.

OpenAI Blog

Following the release of GPT 5.5, there is a noticeable increase in support for Codex among AI Engineers. Anthropic has introduced a new pricing structure for its Claude subscriptions, providing clients with API credits equal to their subscription cost. For example, a $200 subscription grants users $200 worth of API credits to use across various platforms, including programmatic usage of Claude AI. The general reception is cautiously optimistic, though many alternative platform users feel the changes haven't favored their needs adequately.

Latent Space

Anthropic details a postmortem linking complaints about Claude's code quality to recent product changes, ultimately resolving the issues by April 20.

InfoQ AI/ML/Data Engineering News

LangChain has announced the launch of LangChain Labs, aimed at enhancing developer experience and creating a space for testing and building innovative applications using LangChain technologies. The initiative is designed to provide developers with better tools and resources, fostering a collaborative environment to push the boundaries of AI integration in their projects. This new venture comes with a commitment to engage with the developer community through workshops and events, ultimately supporting the growth of the LangChain ecosystem.

LangChain Blog

At the Interrupt event, LangChain announced several new features, including a 35% speed improvement in chain execution, along with new tools and case studies highlighting fast deployment.

LangChain Blog

The NVIDIA Vera Rubin Platform addresses the challenges posed by agentic inference in AI workloads, particularly in managing non-deterministic trajectories that increase end-to-end latency across multiple inference requests.

NVIDIA Technical Blog

Tools & Launches

The Microsoft Agent Framework 1.0 enables the building and orchestration of AI agents, providing multi-agent workflows and A2A protocol interoperability while ensuring every tool call is evaluated against policy with minimal overhead.

Microsoft Agent Framework Blog

The latest release of GitHub Copilot CLI, version 1.0.48, introduces several improvements including a model picker that now displays actual token prices for token-based billing users. The update fixes issues with the display of input text containing CJK characters and emoji, and corrects the token limits shown in the /context command. Moreover, the built-in github-mcp-server is now auto-disabled in Azure DevOps-only workspaces when running in prompt/headless mode, while the /ask dialog has been refined to eliminate unnecessary follow-up replies.

GitHub Copilot CLI Releases

The Copilot cloud agent now includes a feature for auto model selection, allowing users to choose 'Auto' in the model picker. This intelligent system evaluates the health and performance of available models to select the best one for users. By utilizing this feature, users will receive a 10% discount on the normal model multiplier and will not be subject to weekly rate limits. Further details are available in the documentation on auto model selection.

GitHub Changelog
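
As a worked example of the auto model selection discount described above, here is a minimal sketch of the arithmetic. The function name and the sample multiplier values are hypothetical and chosen only for illustration; the only figure taken from the item is the 10% discount on the normal model multiplier.

```python
AUTO_DISCOUNT = 0.10  # the 10% discount mentioned in the item above


def effective_multiplier(base_multiplier: float, auto_selected: bool) -> float:
    """Return the multiplier applied to one request.

    base_multiplier is the model's normal multiplier (values used below are
    hypothetical). When the model was chosen via 'Auto', the discount applies.
    """
    if base_multiplier < 0:
        raise ValueError("base_multiplier must be non-negative")
    if auto_selected:
        return base_multiplier * (1 - AUTO_DISCOUNT)
    return base_multiplier


if __name__ == "__main__":
    for base in (0.33, 1.0):  # hypothetical multipliers
        print(base, "->", round(effective_multiplier(base, auto_selected=True), 4))
```

Under these assumptions, a model with a normal multiplier of 1.0 would count as 0.9 when picked through 'Auto'.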

GitHub has brought the Copilot CLI agent to JetBrains IDEs in public preview, letting users delegate tasks to a terminal-based agent that has editor context. The update includes a unified sessions view for tracking running and queued sessions, showing session titles, elapsed time, and status. A new 'ask question' tool, supported across agent modes, lets the agent clarify prompts to improve task accuracy. Additionally, GitHub Enterprise Server users get an improved sign-in process and global .agent.md support.

GitHub Changelog

Models

Granite Embedding Multilingual R2 offers an efficient multilingual embeddings model with high-quality retrieval capabilities under 100 million parameters.

Hugging Face Blog

Qwen3-TTS delivers high fidelity in voice synthesis while reducing costs and outperforming previous text-to-speech models in benchmarks.

Baseten Blog

Agents

Discover how to build responsive voice agents using Stream's Vision Agents combined with Amazon's technologies, tackling real-time interaction challenges.

AWS AI Blog

Amazon Bedrock AgentCore now supports Chrome enterprise policies, enhancing security and control over AI agent browsing behaviors.

AWS AI Blog


This webinar recap from ElevenLabs covers how to build safe AI agents for enterprise deployment. It focuses on deployment practices that minimize risk while keeping agents effective in corporate environments, on compliance with industry standards, and on testing agents across varied enterprise scenarios to assess their reliability and performance.

ElevenLabs Agents Blog

ElevenLabs has introduced new modalities for its agent framework, ElevenAgents. The update changes the agents' interaction patterns and behavior so that they can handle a wider range of tasks and work more effectively in diverse scenarios, making ElevenAgents more versatile for application developers.

ElevenLabs Agents Blog

Worth Reading

The Secure Code Game allows developers to learn secure coding practices by exploiting vulnerabilities in a gamified editor environment.

AI Native Dev

The article discusses advancements in handling asynchronicity in continuous batching within the Hugging Face ecosystem, focusing on the efficiency and effectiveness of batch processing. It details how these improvements contribute to enhanced resource utilization and reduced latency during model inference. Specific techniques are described, including dynamic batching that adapts to changing workloads, ultimately resulting in up to 30% better throughput compared to standard synchronous methods. The practical implications for developers using Hugging Face's APIs are highlighted, showcasing potential performance gains in real-time applications.

Hugging Face Blog
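
To make the batching idea in the item above concrete, here is a toy simulation, offered as a sketch rather than the Hugging Face implementation. It only counts decode steps and assumes every request needs a known, positive number of steps; the function names are hypothetical, and the asynchronous overlap the article covers is not modeled. It contrasts static batching, where a batch runs until its longest sequence finishes, with continuous batching, where a freed slot is refilled right away.

```python
from collections import deque


def static_batching_steps(lengths, batch_size):
    """Decode steps when each fixed batch runs until its longest sequence ends."""
    steps = 0
    for i in range(0, len(lengths), batch_size):
        steps += max(lengths[i:i + batch_size])
    return steps


def continuous_batching_steps(lengths, batch_size):
    """Decode steps when free slots are refilled from the queue before every step."""
    queue = deque(lengths)
    active = []  # remaining decode steps for each in-flight sequence
    steps = 0
    while queue or active:
        # Admit waiting requests into any free slots.
        while queue and len(active) < batch_size:
            active.append(queue.popleft())
        steps += 1  # one decode step for the whole batch
        # Drop sequences that just produced their last token.
        active = [remaining - 1 for remaining in active if remaining > 1]
    return steps


if __name__ == "__main__":
    lengths = [8, 2, 2, 2]  # hypothetical generation lengths
    print("static:", static_batching_steps(lengths, batch_size=2))
    print("continuous:", continuous_batching_steps(lengths, batch_size=2))
```

With these made-up lengths and a batch size of 2, the static schedule takes 10 steps and the continuous one takes 8, because short requests no longer wait behind the long one.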

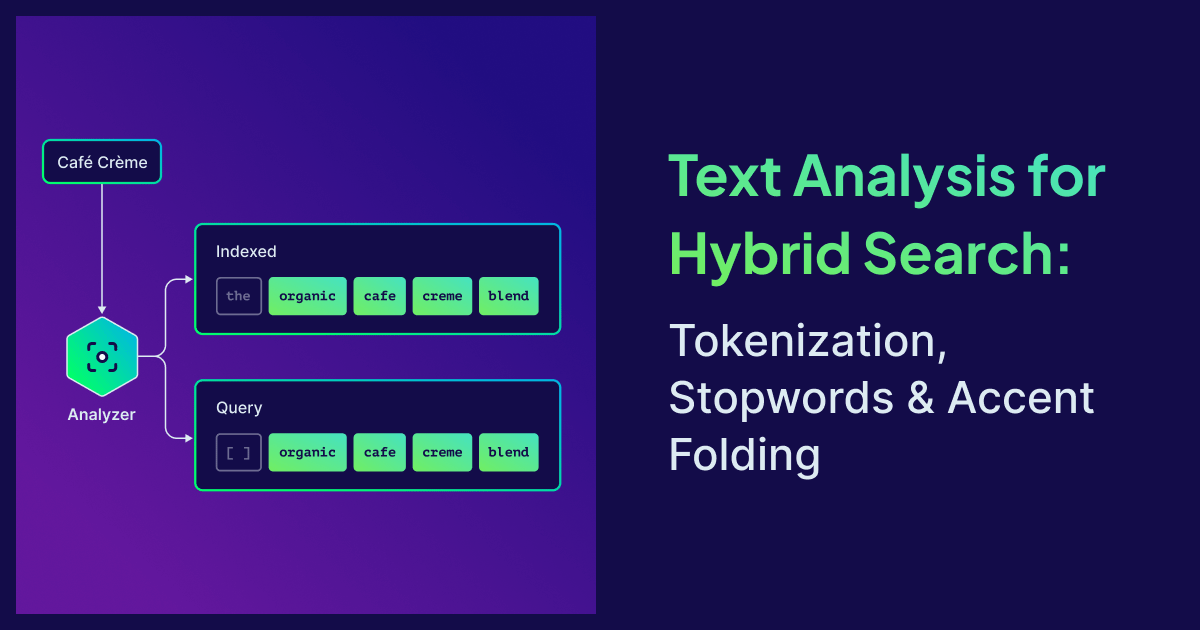

The Weaviate v1.37 update improves hybrid search through better tokenization and multilingual support, addressing problems such as incorrect token handling that can degrade search quality. The article explains why tokenization matters for hybrid search, which fuses vector similarity with BM25 keyword scoring, and introduces new features such as accent folding and per-property stopwords. Key topics include how to select the right tokenizer, why token accuracy matters for search queries, and a new API endpoint for verifying tokenization results.

Weaviate Blog
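
To illustrate what accent folding and per-property stopwords mean for keyword matching, here is a minimal standalone sketch. It does not use Weaviate's API or its tokenizers: the function names, the whitespace tokenization, and the `PROPERTY_STOPWORDS` mapping are all assumptions made for the example.

```python
import unicodedata


def fold_accents(text: str) -> str:
    """Strip combining marks so that 'café' and 'cafe' produce the same token."""
    decomposed = unicodedata.normalize("NFKD", text)
    return "".join(ch for ch in decomposed if not unicodedata.combining(ch))


def tokenize(text: str, stopwords=frozenset(), fold: bool = True):
    """Lowercase, optionally fold accents, split on whitespace, drop stopwords."""
    if fold:
        text = fold_accents(text)
    return [tok for tok in text.lower().split() if tok not in stopwords]


# Hypothetical per-property stopword lists: each property gets its own set.
PROPERTY_STOPWORDS = {
    "title_en": frozenset({"the", "a", "of"}),
    "body_fr": frozenset({"le", "la", "de"}),
}

if __name__ == "__main__":
    print(tokenize("Le café de Zoë", PROPERTY_STOPWORDS["body_fr"]))
    print(tokenize("The Art of Search", PROPERTY_STOPWORDS["title_en"]))
```

In this sketch a query for "cafe" would match a document containing "café", and words that are noise in one property can stay searchable in another.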